Dwain Northey (Gen X)

https://www.cbsnews.com/news/naacp-travel-advisory-florida-says-state-hostile-to-black-americans/

Remember the good old days when there were only travel advisories and/or bans for what some would call third world countries? Well, now, because of the vile vitriol of one Governor Ron DeSantis, the state of Florida, a vacation destination, has received a travel advisory from the NAACP.

The wannabe future President has made the climate so venomous in Florida that anyone who is part of any minority group does not feel safe in the state. Black, Brown, LGBTQ+: these are all groups that are under attack in the Sunshine State. The majority-Republican legislature and its fearful leader have passed laws that make almost everything a jailable offense, and the fact that the state has very loose gun laws and a stand your ground law makes it more dangerous than being a blonde female in Central America.

Florida residents are able to carry concealed guns without a permit under a bill signed into law by Republican Gov. Ron DeSantis. The law, which goes into effect on July 1, means that anyone who can legally own a gun in Florida can carry a concealed gun in public without any training or background check. Combine this with their ridiculous stand your ground law and it gets really sketchy to go there: ‘Florida’s “Stand-Your-Ground” law was passed in 2005. The law allows those who feel a reasonable threat of death or bodily injury to “meet force with force” rather than retreat. Similar “Castle Doctrine” laws assert that a person does not need to retreat if their home is attacked.’

This is on top of the “Don’t Say Gay” rule and the new trans law that just passed.

“Florida lawmakers have no shame. This discriminatory bill is extraordinarily desperate and extreme in a year full of extreme, discriminatory legislation. It is a cruel effort to stigmatize, marginalize and erase the LGBTQ+ community, particularly transgender youth. Let me be clear: gender-affirming care saves lives. Every mainstream American medical and mental health organization – representing millions of providers in the United States – call for age-appropriate, gender-affirming care for transgender and non-binary people.

“These politicians have no place inserting themselves in conversations between doctors, parents, and transgender youth about gender-affirming care. And at the same time that Florida lawmakers crow about protecting parental rights they make an extra-constitutional attempt to strip parents of – you guessed it! – their parental rights. The Human Rights Campaign strongly condemns this bill and will continue to fight for LGBTQ+ youth and their families who deserve better from their elected leaders.”

This law makes it possible for parents to have their child taken from them on nothing more than an accusation of providing gender-affirming care, and that would include someone traveling from out of state. This alone justifies a travel ban to the Magic Kingdom for families.

Oh, and I haven’t even mentioned DeSantis’s holy war with Disney, the largest employer in the state. I really hope the Mouse eats this asshole’s lunch.

Well, that’s enough bitching. Thanks again for suffering through my rant.

-

Solarpunk II

Dwain Northey (Gen X)

Hempcrete and the Architecture of a Solarpunk Future

One of the most intriguing building materials emerging in discussions about a solarpunk future is hempcrete. While gleaming solar panels, vertical gardens, and renewable energy systems often dominate visions of sustainable cities, the materials used to construct those communities may be just as important. If humanity hopes to build a future that is both technologically advanced and environmentally responsible, then we must rethink not only how we power our buildings but also what those buildings are made from. Hempcrete offers a compelling glimpse into that possibility.

Hempcrete is a biocomposite material made from the woody inner core of the hemp plant, known as hemp hurd, mixed with a lime-based binder and water. The result is a lightweight material that can be formed into walls, insulation panels, or building blocks. Unlike traditional concrete, hempcrete is not typically used as a structural material. Instead, it is used alongside timber, steel, or other framing systems to create highly insulated, breathable walls.

What makes hempcrete particularly attractive in a solarpunk future is its relationship with the environment. Conventional construction materials often come with significant ecological costs. Cement production alone is responsible for roughly 8 percent of global carbon dioxide emissions by common estimates, while steel manufacturing requires enormous amounts of energy. Modern construction frequently extracts resources from the earth, consumes vast amounts of fossil fuel energy, and leaves behind structures that are difficult to recycle.

Hempcrete represents a different philosophy. Hemp grows rapidly, often reaching maturity within a few months. During its growth cycle, the plant absorbs carbon dioxide from the atmosphere through photosynthesis. When the hemp is harvested and incorporated into building materials, much of that carbon remains locked within the walls of the structure. Combined with the carbon-absorbing properties of the lime binder, hempcrete buildings can potentially become carbon-negative, meaning they store more carbon than was emitted during their production.

This concept aligns perfectly with the core values of solarpunk. Rather than merely reducing environmental damage, solarpunk seeks systems that actively improve ecological health. The goal is not simply to be less destructive but to become regenerative. Hempcrete embodies that principle by transforming buildings from sources of carbon emissions into long-term carbon storage systems.

The advantages extend beyond carbon sequestration. Hempcrete provides excellent thermal insulation, helping buildings remain cooler in summer and warmer in winter. This reduces the need for energy-intensive heating and cooling systems. In a solarpunk city powered by renewable energy, every watt saved through efficient building design reduces strain on the electrical grid and makes communities more resilient.

Hempcrete is also naturally breathable. Traditional construction methods often create airtight structures that can trap moisture and encourage mold growth. Hempcrete walls allow moisture to move through the material without causing damage, helping regulate indoor humidity levels and creating healthier living environments. This characteristic reflects another recurring solarpunk theme: designing buildings that work with natural processes rather than constantly fighting against them.

Durability is another surprising benefit. While some people hear the word “hemp” and imagine something fragile or temporary, hempcrete structures can last for decades. The lime binder continues to cure over time, gradually strengthening the material. Hempcrete is also resistant to pests, fire, and rot, reducing maintenance requirements and extending the lifespan of buildings.

In a broader economic sense, hempcrete could help decentralize construction supply chains. Hemp can be cultivated in many different regions, allowing communities to produce a significant portion of their building materials locally. A solarpunk future often emphasizes local production, regional self-sufficiency, and resilient economies rather than dependence on distant industrial centers. Farmers could become suppliers not only of food but also of sustainable building materials, creating new economic opportunities while reducing transportation emissions.

Of course, hempcrete is not a miracle solution. It cannot replace every conventional building material, and scaling production would require investment, regulatory adaptation, and agricultural expansion. There are also challenges involving building codes, manufacturing infrastructure, and public familiarity with the material. Yet many transformative technologies begin with precisely these kinds of obstacles.

The significance of hempcrete lies not merely in its practical benefits but in what it symbolizes. For more than a century, industrial development has often treated nature as a resource to be extracted and consumed. Hempcrete suggests an alternative path—one in which buildings emerge from renewable biological systems, store atmospheric carbon, and integrate more harmoniously with the ecosystems around them.

In the solarpunk imagination, cities are not sterile landscapes of steel and concrete standing apart from nature. They are living environments where architecture, agriculture, technology, and ecology are woven together into a coherent whole. Hempcrete is a small but meaningful step toward that vision. It demonstrates that the materials of the future do not necessarily have to be more synthetic, more energy-intensive, or more disconnected from the natural world. Instead, they may come from fields of rapidly growing plants, transformed through thoughtful engineering into homes, schools, and communities that help heal the planet even as they shelter the people who inhabit them.

If solar panels provide the energy of a solarpunk future, hempcrete may help provide its walls. Together they represent a future in which human progress is measured not by how much nature we consume, but by how effectively we learn to build alongside it.

-

Let it Go

Dwain Northey (Gen X)

The Things We Carry That Were Never Ours to Carry

We live in a world that seems determined to keep us stressed twenty-four hours a day, seven days a week. The news never stops. Social media never stops. Political arguments never stop. Economic worries never stop. There is always another crisis, another outrage, another prediction of doom waiting for us the moment we pick up our phones.

The problem is that our minds and bodies were never designed to live under a constant state of alarm.

Stress is not just an emotional burden. It raises blood pressure. It disrupts sleep. It affects digestion. It contributes to anxiety, depression, headaches, and a long list of other health problems. Yet many of us spend enormous amounts of energy worrying about things that are completely beyond our ability to influence.

At some point, for the sake of our own health, we have to become selective about what deserves our emotional investment.

Over the years, I have developed two simple questions that help me determine whether something is worth carrying around in my head.

The first is: Is there any action I can take right now that will make this situation better or change the outcome?

If the answer is yes, then perhaps the stress is serving a purpose. Maybe there is a phone call to make, a problem to solve, a conversation to have, or a task to complete. Action can be productive.

But if the answer is no, then what exactly is the stress accomplishing?

If I cannot fix the problem, influence the outcome, or take meaningful action, then all I am doing is sacrificing my own peace of mind. I am paying an emotional price for something over which I have no control.

The second question is even simpler:

If this doesn’t turn out the way I hope, will my day be different tomorrow?

Sometimes the answer is yes. Some situations genuinely matter. They affect our families, our livelihoods, our health, or our futures.

But many things fail this test.

Take a child’s music recital.

Of course you want them to do well. Of course you want them to enjoy themselves and feel successful. But if they miss a note, forget a line, or have a rough performance, what happens tomorrow?

Nothing catastrophic.

They learn. They grow. They gain experience. They discover that mistakes are survivable.

In fact, those moments are often more valuable than flawless performances. The recital belongs to them, not to the parent sitting nervously in the audience treating every note like a life-or-death event.

The same principle applies to countless frustrations we encounter every day.

A stranger cuts you off in traffic.

Someone honks.

Someone flips you off.

Someone is rude.

So what?

Why should a ten-second interaction with a person you will likely never see again have the power to ruin an entire day?

That person is carrying their own baggage, their own frustrations, their own problems. Their behavior belongs to them. It doesn’t have to become yours.

Yet so often we allow these brief encounters to occupy hours of mental real estate. We replay them in our minds, relive them, and give them importance they never deserved.

Perhaps the greatest source of stress today comes from events happening far beyond our immediate lives.

Wars.

Political conflicts.

Economic uncertainty.

Global crises.

These are real issues, and it is natural to care about them. Being informed and engaged is part of being a responsible citizen.

If attending a protest helps you feel heard, go.

If writing letters to elected officials helps advance a cause you believe in, do it.

If volunteering, donating, or organizing creates positive change, participate.

But it is also important to recognize the limits of your individual control.

You can contribute your voice.

You can contribute your effort.

You can contribute your vote.

What you cannot do is personally carry the weight of the entire world on your shoulders.

Many people spend hours every day consuming bad news and absorbing anxiety from events they have no realistic ability to influence. They mistake worrying for action. They mistake emotional suffering for engagement.

But stress itself is not a contribution.

Stress is not a solution.

Stress is not a strategy.

Holding onto that anxiety day after day does not change the outcome of a war, an election, an economic trend, or an international dispute. It only changes what is happening inside your own body.

It raises your blood pressure.

It steals your sleep.

It drains your energy.

It shortens your patience with the people who actually matter in your life.

There is a difference between caring and carrying.

Caring means paying attention, staying informed, and acting where you can.

Carrying means hauling around emotional weight that was never yours to bear.

The older I get, the more I realize that peace of mind is not achieved by pretending problems don’t exist. It comes from honestly recognizing which problems belong to us and which do not.

Not every battle requires our participation.

Not every insult requires a response.

Not every setback requires panic.

Not every crisis requires us to sacrifice our own well-being.

Sometimes the healthiest thing we can say is, “I wish this were different, but I cannot fix it.”

And once we accept that truth, we can put down burdens that were never helping anyone in the first place.

In a world constantly demanding our attention, our outrage, and our anxiety, perhaps one of the most important forms of self-care is learning to reserve our stress for the things we can actually influence and the people we can actually help.

Everything else is just weight we were never meant to carry.

-

Solarpunk

Dwain Northey (Gen X)

Solarpunk: Imagining a Future Worth Building

For decades, much of our vision of the future has been dominated by dystopia. Popular culture has given us endless versions of cyberpunk cities: towering corporate skyscrapers, polluted skies, neon lights reflected in rain-soaked streets, and populations trapped beneath systems they can neither control nor escape. These stories are compelling because they take many of today’s problems—economic inequality, environmental destruction, and unchecked technology—and project them into a darker tomorrow.

Solarpunk asks a different question.

Instead of asking what happens if everything continues to get worse, solarpunk asks what happens if humanity actually learns from its mistakes.

At its core, solarpunk is a vision of a future where technological advancement and ecological stewardship are not enemies but partners. It imagines cities covered with gardens, buildings designed to generate their own energy, transportation systems that are efficient and clean, and economies that measure success not merely by profit but by sustainability and quality of life.

Unlike the grim aesthetics of cyberpunk, solarpunk is filled with sunlight, greenery, and community. Yet it is not naïve optimism. Solarpunk does not pretend that environmental challenges disappear through wishful thinking. Rather, it proposes that innovation can be directed toward solving problems instead of simply maximizing short-term gains.

One of the defining characteristics of solarpunk is its relationship with industry. Traditional industrialization often relied upon the assumption that nature existed primarily as a resource to be extracted. Forests became lumber, rivers became waste channels, and the atmosphere became a dumping ground for emissions. Solarpunk envisions industries that operate according to a different philosophy: that long-term prosperity depends upon maintaining the ecological systems that support human life.

In a solarpunk future, manufacturing would prioritize renewable materials, recyclable products, and production methods designed to minimize waste. Factories might run on solar, wind, geothermal, or other renewable energy sources. Supply chains would be structured around efficiency rather than excess, reducing unnecessary transportation and resource consumption.

Architecture would undergo a similar transformation. Instead of constructing buildings that merely occupy space, cities would be filled with structures that actively contribute to their environments. Rooftops would generate electricity. Walls could support vertical gardens. Water collection systems would reduce waste while helping communities adapt to changing climates. Urban spaces would blend natural and human-designed environments rather than forcing a rigid separation between them.

Agriculture, too, would reflect this philosophy. Rather than relying exclusively on industrial-scale farming practices that exhaust soil and consume enormous quantities of water, solarpunk envisions regenerative agriculture, urban gardens, and technologies that increase food production while reducing environmental impact. Communities would have stronger connections to the systems that produce their food, making economies more resilient and less dependent on fragile global supply chains.

Perhaps most importantly, solarpunk reimagines economics itself.

Modern economic systems often reward activities that create immediate profit even when they impose long-term costs on society. Pollution, habitat destruction, and resource depletion can generate financial gains in the short run while creating expenses that future generations must bear. Solarpunk proposes that truly successful economies would account for those costs and prioritize investments that create lasting value.

Under such a model, economic growth would not be measured solely by how much is produced or consumed. Success would also be measured by cleaner air, healthier ecosystems, improved public health, stronger communities, and greater resilience against environmental challenges. Innovation would remain important, but innovation would be evaluated by how effectively it improves both human well-being and environmental sustainability.

Critics sometimes dismiss visions like solarpunk as unrealistic. Yet many of the technologies that define the movement already exist. Solar panels continue to become more efficient. Battery storage improves each year. Green building techniques are increasingly common. Advances in recycling, water conservation, and sustainable agriculture are being implemented around the world. The challenge is not whether these technologies are possible; it is whether societies choose to prioritize them.

What makes solarpunk particularly compelling is that it offers something increasingly rare in discussions about the future: hope grounded in practicality. It does not require magical inventions or a complete rejection of modern technology. Instead, it asks humanity to direct its creativity toward building systems that work with nature rather than against it.

The future envisioned by solarpunk is not one without industry, technology, or economic development. It is a future where those forces are aligned with ecological health and human flourishing. It suggests that progress does not have to leave polluted rivers, poisoned air, and exhausted landscapes in its wake. Progress can be measured by how well civilization sustains itself and the world around it.

In that sense, solarpunk is more than an artistic aesthetic or literary genre. It is a philosophy that challenges us to imagine a future where advancement and sustainability are not competing goals but the same goal viewed from different angles. At a time when environmental concerns often inspire anxiety and pessimism, solarpunk offers a simple but powerful proposition: the future can be greener, cleaner, and more prosperous if we choose to build it that way.

-

Ego and the Need to Be Seen

Dwain Northey (Gen X)

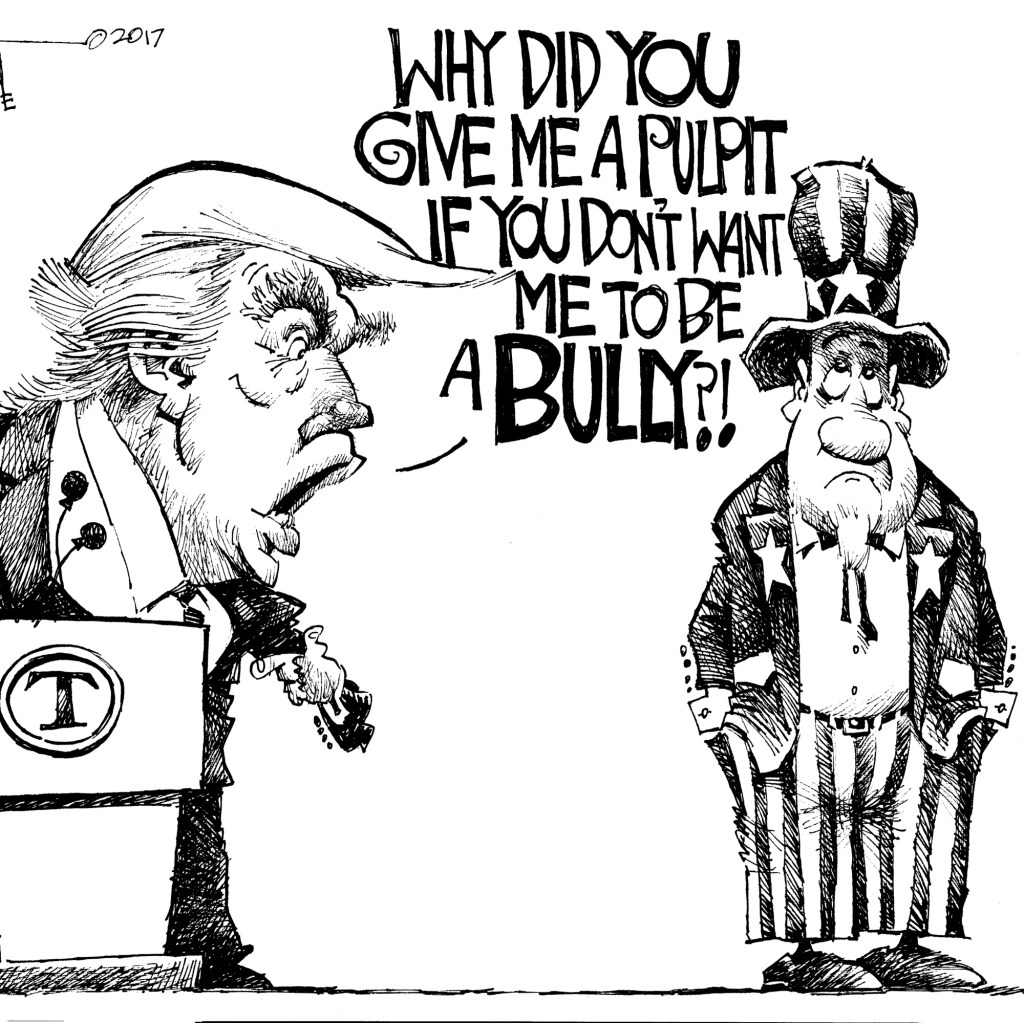

There was a time when presidents understood that not every event was about them.

If your hometown team made it to the championship, you could certainly celebrate. You could congratulate them. You could invite them to the White House afterward. What you generally did not do was insert yourself directly into the middle of the event itself and become part of the story.

Yet here we are.

With New York’s basketball team reaching the Finals, Donald Trump suddenly wants to attend Game Three. Now, to be fair, he’s a New Yorker. Nobody is suggesting he isn’t allowed to enjoy sports or root for a hometown team. The issue isn’t whether he likes basketball. The issue is that the President of the United States cannot simply show up anywhere without fundamentally changing the environment around him.

A sitting president attending a championship game isn’t like an ordinary celebrity buying a ticket. It means road closures, security sweeps, restricted access, Secret Service checkpoints, altered schedules, and thousands of fans dealing with inconveniences they otherwise wouldn’t face. The event immediately becomes partially about the president rather than solely about the athletes and the fans who spent decades waiting for moments like this.

And that’s the difference.

Most presidents understood that there are occasions when the spotlight belongs somewhere else.

If the Chicago Bulls had somehow reached the NBA Finals during Barack Obama’s presidency, nobody seriously believes he would have decided that Game Seven was the perfect venue for a presidential appearance. He understood that the story was the team. The players. The fans. The city.

The same principle has applied throughout modern presidential history. Presidents have attended sporting events, certainly. But they generally recognized that championship moments belong to the athletes competing and the communities celebrating.

Donald Trump has always operated differently.

For him, every event appears to be evaluated through the same question: “How can I become part of the headline?”

A military parade becomes about him.

A disaster response becomes about him.

A diplomatic summit becomes about him.

A sporting event becomes about him.

The pattern is so familiar that it barely surprises anyone anymore.

The irony is that the fans don’t need him there. Knicks fans—or any team’s fans in this situation—have waited years, sometimes decades, for a chance to watch their team compete for a championship. They aren’t buying tickets because they’re hoping to catch a glimpse of the President. They’re there because they love basketball and because this may be a once-in-a-generation moment for their franchise.

Yet the presence of a president inevitably shifts media coverage, security planning, and public attention away from the court and toward the luxury suite.

The players become a secondary story.

The game becomes a secondary story.

The crowd becomes a secondary story.

The president becomes the story.

That may be unavoidable in some circumstances. The problem arises when the president seems to enjoy that outcome.

There is also an uncomfortable possibility that after all the attention, all the security arrangements, all the disruption, the actual game itself may not be particularly important to him. Trump has developed a reputation for treating sporting events less as competitions to be appreciated and more as stages upon which he can be seen.

One suspects that if you asked many lifelong fans to choose between having their team in the Finals and having the President attend the game, they would choose the Finals every single time without hesitation.

Because that’s what matters.

The players matter.

The coaches matter.

The fans matter.

The championship matters.

What should not matter is whether the most powerful person in the country can find yet another opportunity to place himself at the center of someone else’s moment.

Leadership often requires understanding when to speak and when to remain silent. It requires understanding when to lead and when to let others have their day. Perhaps most importantly, it requires understanding that not every spotlight belongs to you.

For many presidents, that lesson came naturally.

For Donald Trump, it seems to be the one lesson he never learned.

-

The Candidate Who Never

Dwain Northey (Gen X)

Imagine a fictional election cycle.

A candidate runs for office with campaign signs that are simple and direct. Some feature a bright red background with white lettering. Others use a white background with red lettering. Every sign carries the same message:

Military Veteran. Supports a Balanced Budget. Supports Law Enforcement.

That’s it.

No party label. No donkey. No elephant. No mention of Democrat or Republican. Just three statements describing the candidate and his positions.

Every statement is true.

The candidate served in the military. He genuinely supports balanced budgets. He supports police departments and public safety. There are no false claims, no misleading credentials, and no hidden meanings.

Election Day arrives, and he wins.

Then comes the surprise.

The candidate is a Democrat.

Almost immediately, some voters begin claiming they were deceived. They say the candidate was dishonest. They argue that he intentionally misled the public.

But what exactly was the lie?

The signs never claimed he was a Republican.

The signs never claimed he was a conservative.

The signs never mentioned a party affiliation at all.

What happened is not that voters were deceived. What happened is that voters made assumptions.

The red-and-white color scheme probably helped those assumptions along. For years, Americans have been conditioned to associate red with Republicans and blue with Democrats. Many voters would see red campaign signs talking about military service, balanced budgets, and support for law enforcement and automatically conclude they knew what party the candidate belonged to.

The candidate never said it.

The voters said it to themselves.

That distinction matters.

Over the last several decades, American politics has become increasingly tribal. Certain values and issues have been branded so successfully by one party that many people forget those positions are not exclusive to that party.

Military service is assumed to be Republican.

Support for law enforcement is assumed to be Republican.

Balanced budgets are assumed to be Republican.

Yet none of those positions belong exclusively to Republicans.

There are Democrats who have served in the military. There are Democrats who support police departments. There are Democrats who believe government should live within its means.

In fact, the balanced budget issue may be the most revealing assumption of all.

Republicans have spent decades marketing themselves as the party of fiscal responsibility. The phrase “balanced budget” has become part of the brand. But branding and reality are not always the same thing.

If voters looked only at campaign rhetoric, they might conclude Republicans are the only people concerned about deficits and government debt. If they looked at the historical record, they might discover a far more complicated story.

Recent history is filled with examples of Republican politicians campaigning on fiscal restraint while supporting tax cuts, spending increases, or both. At the same time, several Democratic administrations have presided over periods of deficit reduction and, in some cases, budget surpluses.

That does not mean every Democrat is fiscally responsible or every Republican is fiscally reckless. Reality is rarely that simple. But it does challenge the assumption that concern for balanced budgets belongs to only one political party.

The fictional candidate’s sign did not say, “I support a balanced budget because I’m a Republican.”

It simply said he supports a balanced budget.

The voters supplied the rest of the sentence.

What makes this thought experiment interesting is the reaction after the election. Rather than questioning their assumptions, many people would likely direct their anger at the candidate. They would claim the absence of a party label was deceptive.

But is a candidate responsible for assumptions voters make on their own?

If a restaurant advertises that it serves steak, customers cannot later complain that nobody informed them of the owner’s political affiliation. The information presented was accurate. The assumptions belonged to the customer.

The same principle applies here.

The candidate never lied.

He never hid his military service.

He never hid his support for law enforcement.

He never hid his support for balanced budgets.

The only thing he didn’t provide was a team jersey.

And perhaps that is why the hypothetical causes such discomfort.

Many Americans claim they vote based on policies, qualifications, and ideas. Yet this scenario suggests that a surprising number of voters may be relying on political branding instead. They see a color. They hear a familiar phrase. They recognize a stereotype. Then they assign a party affiliation before ever examining the candidate himself.

When the election is over and they discover the candidate is a Democrat, they feel betrayed—not because he lied, but because their assumptions turned out to be wrong.

The most revealing question isn’t whether the candidate was deceptive.

The most revealing question is why so many people automatically assumed that military service, support for public safety, and concern about balanced budgets could only belong to one political party.

The candidate never lied.

The assumptions did all the work.

And in today’s political climate, that may be the biggest truth of all.

-

The Easiest Way to Offend Donald Trump Is to Quote Donald Trump

Dwain Northey (Gen X)

One of the strangest features of Donald Trump’s political career is that he often becomes the most defensive when confronted with his own words. You can accuse him of almost anything and he’ll swat it away, but quote something he actually said six months ago and suddenly you’re watching an Olympic-level exercise in verbal gymnastics.

A recent interview provided a perfect example. The interviewer asked him about the fact that he campaigned on a promise of “no new wars,” yet America now finds itself involved in multiple military conflicts. Instead of addressing the substance of the question, Trump immediately shifted into lawyer mode.

“I never promised there would be no new wars.”

Technically, that’s a clever answer. It is also an answer to a question that wasn’t actually asked.

Of course no president can promise there will never be a new war. A president cannot guarantee that another nation won’t attack an ally, launch a missile, invade a neighbor, or create a crisis that demands a response. Nobody expects a president to predict every possible future event.

But that wasn’t the spirit of the campaign message.

When voters heard “no new wars,” they weren’t interpreting it as a magical guarantee that world history would stop happening. They understood it as a commitment that Trump himself would not be the one choosing to expand conflicts, initiate military adventures, or escalate situations that otherwise might have remained contained.

That’s the distinction that should have been pressed.

A better follow-up question might have been:

“Of course you can’t guarantee that some unforeseen event won’t create a military crisis. Nobody expects that. But when you campaigned on avoiding new wars, voters understood that to mean you wouldn’t be the one starting them. Today the United States is involved in more military conflicts than when you took office. Some of those were the direct result of decisions made by your administration. How do you reconcile those actions with the promise you made during the campaign?”

That’s the question that gets to the heart of the issue.

Because what Trump often does is argue against the literal wording of a criticism while ignoring the obvious meaning behind it. It’s a debating technique that works remarkably well in modern politics. If someone says, “You promised X,” he responds by finding a technicality that allows him to claim he never literally said X in precisely those words. His supporters hear a rebuttal. His critics hear an evasion. The actual substance disappears into the fog.

The pattern repeats itself constantly.

When challenged about spending, he talks about revenue.

When challenged about deficits, he talks about growth.

When challenged about statements he made on video, he argues about the interpretation rather than the statement itself.

The discussion shifts from what happened to whether the wording of the criticism was perfect.

It’s like arguing with someone who was caught speeding and responds by saying, “Well, technically the officer said I was driving fast, and speed is a relative term.”

Maybe. But everyone knows what the conversation is really about.

Another telltale sign of Trump’s approach to criticism is what happens when there is no room for interpretation at all.

Sometimes the challenge isn’t based on a newspaper article or an anonymous source. Sometimes the evidence is literally his own words.

An interviewer can say, “This is your tweet.”

Or, “This is your Truth Social post.”

Or, “This is video of you answering a question from the press corps.”

At that point there isn’t much room to argue that the media took him out of context. The source isn’t a hostile journalist. The source is Donald Trump himself.

Yet rather than addressing the substance of what he said, the conversation often takes a familiar turn.

The interviewer becomes the problem.

Suddenly the response isn’t, “Here’s why I changed my position.”

It isn’t, “The facts on the ground changed.”

It isn’t even, “I was wrong.”

Instead, it becomes, “You’re a nasty person.”

“You’re terrible at your job.”

“You don’t know what you’re doing.”

The criticism shifts from the statement to the person asking about the statement.

It’s a remarkable political magic trick. The evidence can be a direct quote, a social media post, or a video recording, but somehow the controversy becomes the character of the person holding up the mirror rather than the reflection staring back from it.

Imagine any other profession operating this way.

A CEO is shown a memo he wrote and responds by attacking the employee who brought it to the meeting.

A quarterback watches game film of a bad throw and responds by insulting the cameraman.

A contractor is shown the blueprint he signed and decides the architect is a terrible person for asking why the wall is crooked.

Most people would recognize that as avoiding accountability. In politics, however, it often becomes part of the show.

Trump’s political gift has always been his ability to recognize these escape hatches faster than his opponents. His weakness is that he often seems genuinely offended that anyone would hold him accountable for the expectations he created in the first place.

The easiest way to provoke Donald Trump isn’t to invent something about him. It’s to remind people of what he actually said.

And the moment you do, the conversation often stops being about the original promise and becomes a debate over definitions, wording, technicalities, semantics, or the motives of the person asking the question.

The evidence can be his tweet. His post. His speech. His interview. His video.

Yet somehow the real offense isn’t what he said.

The real offense is having the audacity to remember it.

The circle gets squared not by answering the question, but by changing what the question means. If that doesn’t work, then the focus shifts to attacking the person who dared ask it.

For supporters, that’s effective political combat.

For critics, it’s exhausting.

For everyone else, it’s another reminder that in modern politics, the hardest thing in the world is getting a straight answer to a simple question.

-

Donald Trump, Independence Day, and the War He Imagined

Dwain Northey (Gen X)

There is an old saying that when you find yourself in a hole, the first thing you should do is stop digging. Donald Trump apparently heard that advice and responded by ordering a larger shovel.

We now find ourselves watching another chapter in an unnecessary and constitutionally questionable military adventure with Iran, a conflict that seems to have been launched without a clear endgame and with goals that appear remarkably similar to what was on the table before the first shot was fired.

That is the part that should make everyone pause. The demands being made today are, in many cases, the same demands that could have been pursued through diplomacy a hundred days ago. After all the threats, chest-thumping, airstrikes, press conferences, and declarations of strength, we seem to have arrived right back where we started.

At some point you have to wonder if Iran is sitting across the table trying not to laugh.

The situation increasingly resembles a businessman setting fire to his own office and then demanding praise because he found a bucket of water.

The problem is that Donald Trump has always viewed himself as the hero of every movie playing inside his head. Somewhere in that imagination, dramatic music is swelling. Fighter jets are roaring overhead. Advisors are looking nervous. The world is hanging by a thread, and only one man can save it.

Unfortunately, reality is not a Hollywood screenplay.

Trump appears to see himself as the president from Independence Day, standing before humanity, delivering the inspirational speech that unites the world against an existential threat. In his mind, he is both the president and the action hero. He’s the commander-in-chief, the ace pilot, the strategist, and probably the guy who gets the girl before the credits roll.

The problem is that Independence Day involved giant alien spaceships attacking Earth. Reality involves complicated geopolitics, alliances, economic consequences, military casualties, and the inconvenient fact that other countries are not obligated to participate in your fantasy.

History is filled with leaders who convinced themselves that they alone could bend events to their will. History is also filled with examples of how badly that tends to end.

What makes this episode particularly bizarre is that the administration continues to present every development as evidence of success, even when success increasingly resembles returning to the exact position that existed before the conflict began. It’s like crashing your car into a tree and demanding applause because you’ve successfully located the road again.

Meanwhile, Americans are left paying the bill, military families are left carrying the burden, and the rest of the world is left trying to determine whether this is a coherent strategy or simply another season of reality television masquerading as foreign policy.

The fantasy remains unchanged. Trump still imagines himself soaring through the skies, saving civilization while grateful crowds cheer below. But the real world has a nasty habit of refusing to follow the script.

The aliens aren’t coming. Will Smith isn’t flying cover. The soundtrack isn’t swelling. And no amount of wishful thinking can transform a self-created crisis into a heroic rescue mission.

In the end, the greatest obstacle to Donald Trump’s Independence Day fantasy may be the simple fact that reality keeps showing up and ruining the movie.

-

Learning to Let Go

Dwain Northey (Gen X)

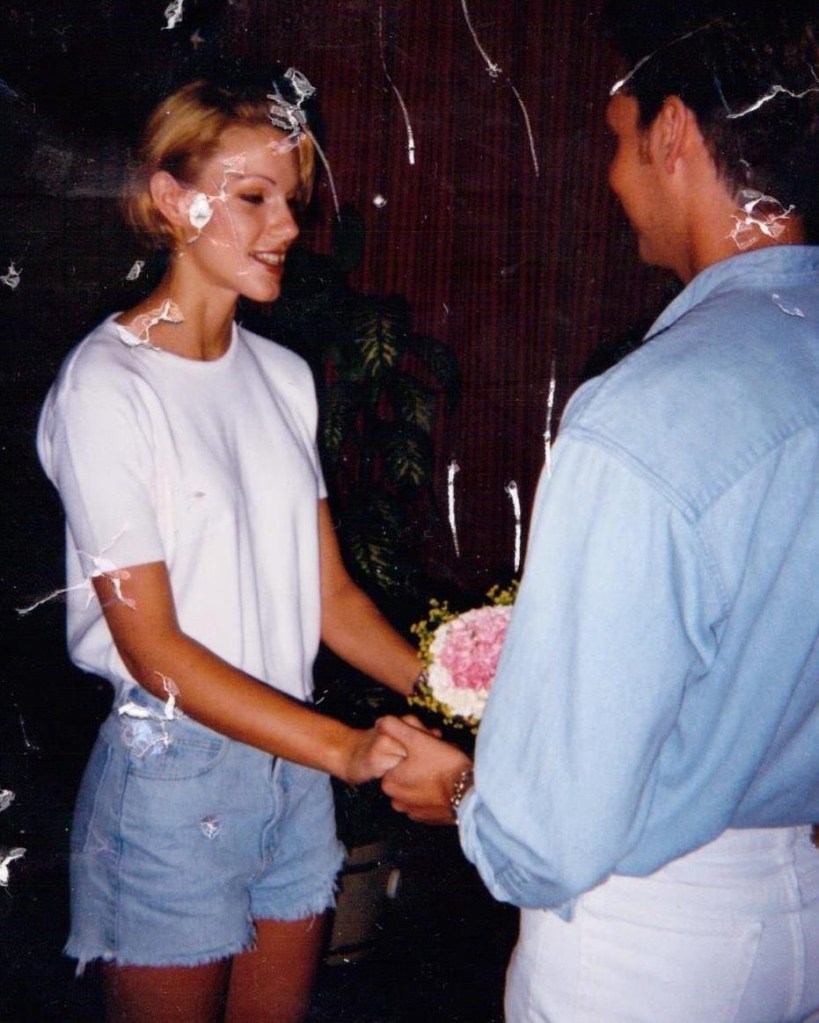

Thirty years ago today, in a small civil ceremony in Bowie, Maryland, I married the person I believed I would spend the rest of my life with.

There was no grand cathedral, no elaborate production, no television cameras, and no audience beyond a handful of people who mattered. Just two people standing in front of a judge, making promises that seemed permanent. At the time, I never imagined I would one day be sitting here, three decades later, staring at a photograph from that day and wondering how a future that seemed so certain could disappear.

The strange thing about memories is that they don’t age the way people do.

The people in that photograph are frozen in time. They don’t know what is coming. They don’t know about the years ahead, the victories and failures, the laughter and arguments, the moments that would bring them closer together and the moments that would eventually drive them apart. They are still standing there, smiling, believing they have solved the mystery of life.

I envy them sometimes.

Thirteen years ago, she decided that the life we had built together was no longer the life she wanted. That is not a criticism. It is simply a fact. People change. Priorities change. Dreams change. Sometimes two people who once walked the same path discover they are heading in different directions.

Since then, she has built a new life. She remarried years ago. She has two more children. By every outward measure, she moved forward. The story continued.

Meanwhile, every year when this date rolls around, I find myself returning to that photograph and asking the same question I have asked countless times before:

What happened?

The frustrating part is that after all these years, I still don’t have an answer.

There was no single dramatic moment that explains everything. No smoking gun. No revelation that suddenly makes the ending make sense. Life is rarely that neat. Relationships are not mathematical equations where you can plug in the variables and arrive at a definitive solution.

Instead, there are fragments. Conversations half remembered. Mistakes made by both people. Opportunities missed. Small cracks that seemed insignificant at the time but eventually became impossible to ignore.

And yet none of those fragments fully answers the question.

For a long time, I thought if I just analyzed the past carefully enough, I would eventually discover the missing piece. There would be a moment of clarity when everything suddenly fit together.

That moment never came.

What I am slowly beginning to understand is that maybe the answer is not hidden somewhere in the past waiting to be discovered. Maybe there isn’t an answer that would satisfy me even if I found it.

Maybe some chapters of our lives end without providing the closure we desperately want.

That is a difficult lesson for me because I have always believed problems can be solved. Questions can be answered. Mysteries can be unraveled.

But relationships are different.

Sometimes people leave.

Sometimes love changes shape.

Sometimes two people tell each other forever and genuinely mean it at the time, only to discover later that forever turned out to be much shorter than they expected.

The hardest part is not losing the marriage. The hardest part is letting go of the search for an explanation.

Because as long as I keep asking what happened, some part of me is still standing in that courtroom thirty years ago, refusing to leave. Some part of me is still trying to rewrite a story whose ending was decided long ago.

The photograph cannot answer my questions.

Neither can the years.

Neither can she.

The answer I have been chasing for thirteen years may simply not exist.

And perhaps that is where letting go begins.

Not with forgetting.

Not with pretending those years never mattered.

Not with denying that I still feel sadness when I look at that picture.

Letting go means accepting that some of the most important events in our lives will never fully make sense.

It means honoring the memories without becoming trapped inside them.

It means recognizing that the young man standing in that photograph was not foolish for believing in forever. He was hopeful. He was in love. He was doing the best he could with the future he imagined.

I don’t need to judge him.

I don’t need to rescue him.

I don’t need to solve the mystery for him.

Maybe after thirty years, the lesson is not figuring out what happened.

Maybe the lesson is accepting that it happened.

And then allowing myself, finally, to keep walking forward.

-

Russia: Superpower or Historical Accident?

Dwain Northey (Gen X)

History is full of strange “what if” questions. What if Napoleon had won at Waterloo? What if the South had won the Civil War? What if someone had told the crew of the Titanic that maybe steaming toward an iceberg at full speed wasn’t a great idea?

One of my favorites is this: What if Russia had never played the role it did in World War I and World War II? Would it be the global power everyone treats it as today, or would it simply be another large country most people couldn’t find on a map?

Before the twentieth century, Russia was certainly big. It had a lot of land. It had a lot of people. It had a lot of winters. What it didn’t have was the kind of global influence we associate with great powers today. It was ruled by czars who often seemed more interested in maintaining absolute power than modernizing the country. Industrialization lagged behind Western Europe. Political institutions were archaic. The economy was largely agricultural. In many ways, Russia was less a modern power than a giant empire held together by geography and force.

Then came World War I.

Russia entered the conflict as one of Europe’s major empires, but the war exposed just how fragile the country really was. Millions of soldiers were thrown into battle with inadequate equipment, poor leadership, and staggering casualties. The war ultimately helped bring down the Romanov dynasty and paved the way for the Bolshevik Revolution. Russia didn’t emerge from World War I stronger. It emerged transformed.

Then came World War II, where the Soviet Union paid a price that is almost impossible to comprehend today. Entire cities were destroyed. Tens of millions died. The Eastern Front became history’s largest and bloodiest meat grinder. Soviet leaders demonstrated a willingness to absorb losses that would have broken virtually any other nation on Earth. Whether one sees that as resilience, brutality, or some combination of both, it undeniably altered the course of the war.

At the same time, Franklin Roosevelt made the strategic decision that defeating Nazi Germany required cooperation with the Soviet Union. The alliance between the United States, Britain, and the USSR was never based on friendship. It was based on necessity. Yet that alliance had enormous consequences. By war’s end, the Soviet Union occupied much of Eastern Europe and emerged as one of two global superpowers.

The Cold War cemented that status. For nearly half a century, the world was organized around the rivalry between Washington and Moscow. Nuclear arsenals, proxy wars, espionage, and ideological competition gave the Soviet Union influence far beyond what its economy alone might have justified.

Which brings us to today.

Modern Russia still occupies an enormous amount of territory. It possesses vast natural resources and one of the world’s largest nuclear arsenals. Yet economically, it struggles to match countries that occupy a fraction of its landmass. Its economy is frequently compared to those of medium-sized European nations despite spanning eleven time zones.

This raises an uncomfortable question. If Russia had never emerged from World War II as one of the victorious powers and if the Cold War had never elevated it into superpower status, would the world view it much differently than it views other large but economically middling countries?

The answer may be yes.

Much of Russia’s modern influence rests on foundations built during the twentieth century. Its permanent seat on the United Nations Security Council, its nuclear arsenal, its military prestige, and much of its geopolitical relevance stem directly from the outcome of World War II and the Cold War that followed.

Without those events, Russia might still be large. It might still be resource-rich. It might still be important regionally. But it is difficult to imagine it commanding the same level of global attention.

In a sense, Russia’s story demonstrates that geography alone does not create power. Land helps. Resources help. Population helps. But historical circumstances matter just as much. The Soviet Union’s sacrifices during World War II and the geopolitical realities that followed transformed Russia from a struggling empire into one of the defining powers of the modern age.

Whether that status can be maintained in the twenty-first century is another question entirely.

Because eventually every nation discovers that memories of past victories can only carry you so far. At some point, significance has to come from what you are now, not simply what your grandparents accomplished eighty years ago.

-

Reflecting Pools for People Who Don’t Understand Reflection

Dwain Northey (Gen X)

It should surprise absolutely no one that a cut-rate hotel owner would decide to paint a reflecting pool bright blue and somehow believe it would improve the reflection. This is the same level of thinking that gives us gold-plated toilets, giant names on buildings, and the belief that every problem can be solved by making it louder.

A reflecting pool exists for one purpose: reflection. The clue is literally in the name.

The entire concept is based on creating a calm, mirror-like surface that captures the sky, surrounding architecture, trees, or whatever happens to be around it. That’s why reflecting pools traditionally have dark or neutral bottoms. They are designed to disappear visually so your eye focuses on the reflected image rather than what’s underneath the water.

Paint the bottom bright blue, however, and congratulations—you’ve created a swimming pool.

That’s it. You haven’t enhanced the reflection. You’ve made the water announce its presence. Instead of seeing the sky mirrored on the surface, your eye now notices the giant blue object sitting underneath it. It’s the aquatic equivalent of hanging a neon sign in front of a window and wondering why you can’t see outside.

Light isn’t complicated. Water reflects some light at the surface, and the rest passes through to whatever is underneath. The color beneath the water affects what your eyes perceive. A darker, neutral bottom absorbs most of that light and tends to disappear. A bright blue bottom bounces it right back, screams, “LOOK AT ME!” and competes with the reflection.

Nobody looks at a hotel pool and says, “Wow, what a magnificent reflecting pool.” They look at it and think about sunscreen, screaming children, and whether the swim-up bar is open.

The irony is that some of the most beautiful reflections in the world come from places that almost vanish into the background. Quiet lakes. Calm ponds. Infinity pools with neutral gray or dark bottoms that blend into the horizon. The whole point is to remove distractions.

But that requires understanding that not everything has to be painted a brighter color to work better.

Then again, we’re talking about the design philosophy that brought us casinos that look like wedding cakes, penthouses decorated like Roman emperors won the lottery, and buildings where subtlety was declared an enemy of the state.

So no, nobody should be surprised.

A reflecting pool painted blue is exactly the kind of idea that sounds brilliant if your entire understanding of architecture comes from staring out the window of a budget hotel and thinking, “You know what this needs? More blue.”

The pool reflects the world above it. The color underneath should be almost invisible. That’s how reflection works.

Of course, understanding reflection requires someone to grasp the concept that the world doesn’t revolve around whatever color paint happened to be on sale this week.
